TMTB Morning Wrap

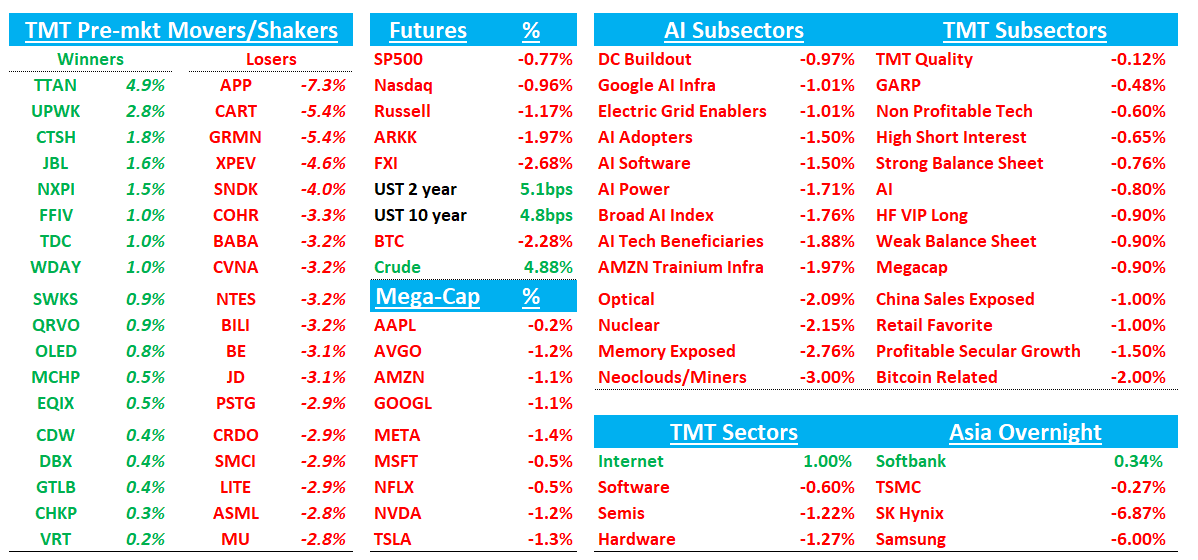

Good morning. QQQs -1% as investors remain nervous about developments in Iran. Not a ton of news overnight, but no good news either: both sides remain very far apart, although it’s clear Trump is looking for an off-ramp. Oil up another 5% as Brent continues to bounce off $100. Yields popping another 5bps across the curve. The green in Tech yesterday was nice, but now it’s back to the Iran reality. Asia generally red overnight: TPX -0.22%, NKY -0.27%, Hang Seng -1.89%, HSCEI -2.25%, SHCOMP -1.09%, Shenzhen -1.46%, Taiwan TAIEX -0.3%, Korea KOSPI -3.22%, BTC -2%.

Lots to get to in Tech so let’s get to it…

QCOM: Bernstein Downgrades to Market Perform, Cuts PT to $140 — “Doing Everything Right, but Living in a Bad Neighborhood”

Bernstein downgrades Qualcomm from Outperform, cutting its target from $175 to $140 (14x FY27 EPS). The firm argues surging mobile memory prices (LPDDR up 3x YoY, NAND up ~60%) will likely drive double-digit smartphone unit declines, further pressuring numbers that already "still look too high" given what it calls "astonishingly lazy" sell-side modeling of the Apple roll-off (AAPL share stepping from 80% to 20%, ~$1.50 EPS impact). The stock is cheap, but Bernstein notes that's "not a reason to own in semis" when "undisputed AI datacenter winners like NVIDIA or AVGO" trade at 15x or less.

Memory/MU/SNDK: TurboQuant Reignites Efficiency Debate, But FundaAI Sees Demand Expansion Intact

FundaAI notes KV cache compression is an inference-side optimization that reduces working memory footprint, not overall storage demand, and instead enables longer context, higher batch sizes, and greater concurrency. The firm adds TurboQuant is not a new breakthrough and lacks proof at frontier-scale deployment, with indications that scaling beyond ~100B parameter models introduces meaningful challenges. More importantly, FundaAI says efficiency gains are typically reinvested—consistent with Jevons Paradox—as lower costs per query drive expansion in long-context and agent-driven workloads. Net, FundaAI views this as supportive of sustained memory demand, with savings likely redeployed into “more state, longer context, and higher concurrency,” rather than signaling structural demand erosion.

SNDK: BofA Reiterates Buy; AI Inference Demand Firm, TurboQuant a Net Positive

BofA says investor meetings with SNDK’s CFO reinforced confidence in durable NAND demand from AI inference and hyperscalers, with no incremental capacity beyond high-teens supply growth through 2026/27. The analyst notes rising interest in long-term supply deals with hybrid pricing, supporting improved visibility and reduced cyclicality, alongside mix shift toward higher-margin SSDs into CY26+. On TurboQuant, BofA says efficiency gains should boost hyperscaler ROI and drive incremental demand, not reduce it. Maintains Buy and $900 PO (~10x CY27 EPS). On China, BofA said YMTC is mostly serving demand for NAND within China, and SNDK does not see it as a meaningful shipper of NAND into the U.S.

MU/SNDK: Morgan Stanley Reiterates Overweight, Says Memory Selloff Overdone as AI Demand Makes This Cycle Different

Morgan Stanley argues the recent memory selloff is a “healthy pricing in of durability concerns” but that bears are applying the wrong framework, because “memory is increasingly THE primary constraint on AI demand,” not just a beneficiary of it. The firm notes AI is consuming so much DRAM that it’s crowding out PC and smartphone supply, cloud customers are paying expedite premiums, and HBM4 complexity with Rubin Ultra will absorb new capacity. On Google’s TurboQuant KV cache optimization that spooked the group, Morgan Stanley calls it “an evolutionary development, with basically no surprises for memory,” noting the 6x gain applies only to KV cache, not total memory. The firm adds there is no evidence demand is going down “universally across our contacts.”
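For a rough sense of why a 6x gain on KV cache alone does not translate into 6x less total memory, here is a back-of-envelope sketch. It is our own illustration, not from the Morgan Stanley note: the `kv_fraction` values (the share of total memory taken by KV cache) are hypothetical placeholders, and only the 6x figure comes from the text above.

```python
def total_memory_reduction(kv_fraction: float, kv_compression: float = 6.0) -> float:
    """Factor by which total memory shrinks when only the KV cache is compressed.

    kv_fraction: share of total memory used by KV cache (hypothetical input).
    kv_compression: compression ratio applied to the KV cache only.
    """
    if not 0.0 <= kv_fraction <= 1.0:
        raise ValueError("kv_fraction must be between 0 and 1")
    if kv_compression <= 0:
        raise ValueError("kv_compression must be positive")
    # Memory left over = untouched portion + compressed KV cache portion.
    remaining = (1.0 - kv_fraction) + kv_fraction / kv_compression
    return 1.0 / remaining


if __name__ == "__main__":
    # Hypothetical KV cache shares, chosen only to show the shape of the curve.
    for share in (0.1, 0.3, 0.5):
        factor = total_memory_reduction(share)
        print(f"KV cache share {share:.0%}: total memory shrinks {factor:.2f}x")
```

The total reduction only approaches 6x as the KV cache share approaches 100%, and this ignores any reinvestment of the savings into longer context or higher concurrency.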

ARM: Stratechery interviews Rene Haas, CEO of ARM

ARM CEO Rene Haas discussed the company's historic shift from pure IP licensing to designing and selling its own chips, starting with a custom processor for Meta. The decision emerged in mid-2025 when Meta approached Arm to complete a Compute Subsystem design rather than finish it themselves, leading Arm to enter the fabless chip market. Haas explains that CPUs are critical to AI infrastructure for token distribution and orchestration, positioning Arm's energy-efficient processors to complement rather than compete with Nvidia's offerings in data center deployments. The company faces execution risks including supply chain constraints (particularly memory bandwidth) and operational challenges new to its business model, but has hired experienced chip industry veterans to manage the transition.

On Agentic AI Driving a Step-Function Increase in CPU Core Demand:

“I have a belief that each one of these cores will want to potentially run their own agent, launch a hypervisor job, launch a job that can be run independently, launch it, get the work done, go to sleep. The performance of the core is going to matter, no doubt about it, but I think the efficiency of that core is probably going to matter just as much as the performance... CSSs with greater than 128 cores in the implementation? Absolutely. Do I think, could I see 256? Absolutely. Could I see 512? Possibly.”

On Memory:

"I'm probably less worried about [TSMC capacity] at the moment than I am about memory. I think that the business, the demand is very, very high actually for the chip, and through our partner, we're able to secure upside through TSMC, that has not been a problem. But memory is quite challenging and I think if there's any limit to how big this business can get... if there was more memory could we sell more? Yes... First off, obviously HBM being such a capacity hog, and then people moving from LP into HBM at the memory guys, then compounding on it, all of the explosion of the CPU demand drives up memory demand. So it all kind of adds on to itself, which makes the memory problem pretty acute."

ARM: Needham Upgrades to Buy, PT $200; Silicon Strategy Drives AI Upside

Needham upgrades ARM to Buy with a $200 PT, saying its push into silicon, higher royalty rates, and expansion into subsystems are starting to pay off and reposition the company as a credible AI play. The analyst notes the new AGI CPU launch—targeting hyperscale and enterprise workloads with strong early customer traction (incl. Meta and OpenAI)—marks a key step into the silicon market. Needham adds near-term COT risk from hyperscalers remains but is mitigated by multi-year contracts and execution challenges in in-house chip design, supporting limited risk over the next 2–3 years. Longer term, the firm sees a path to ~$15B in silicon revenue by CY30 with margins expanding to 50%+ as ARM transitions to full COT, underpinning ~$9 EPS potential.

GOOGL: Evercore ISI Reiterates Outperform/$400 PT, Proprietary Survey Shows Search Position Holding Firm Despite AI Disruption Fears
