TMTB EOD Wrap: Altman, Broadcom (AVGO) & Citrini's Optical Piece as Oil/Iran remains main focus

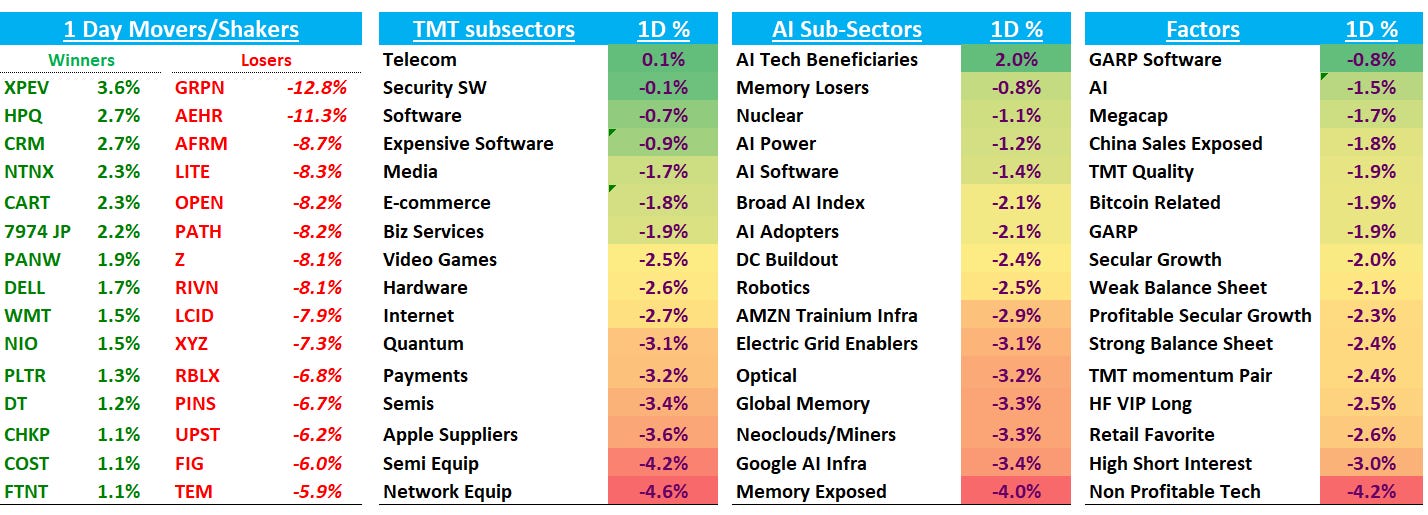

Good afternoon. QQQs -1.7% as investors grow a bit more worried about what’s going on in Iran and hope for an off-ramp from Trump sooner rather than later. Media reports suggest the WH finds it politically intolerable for oil to stay at present levels for much longer, given Brent just popped above $100 again (+11%). 2-year yields popped 8bps while the longer end of the curve was only up 3bps. The market is now pricing in only 20bps worth of cuts this year as Fed expectations continue to move in a more hawkish direction with oil’s rise, and a more hawkish shift is never good for risk.

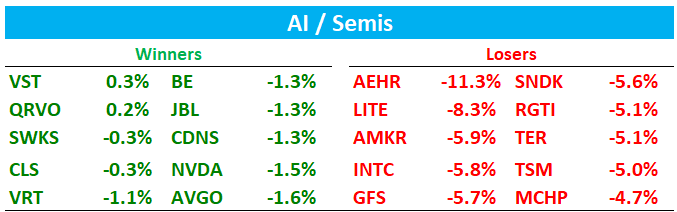

In Tech, investors just wish they could focus on GTC instead of WW3. Lots of red across the board, with semis underperforming software by close to 300bps amid weakness in Optical (more on that below), while hardware outperformed. From a factor perspective: non-profitable/highly shorted names led the way lower while GARP and Megacap outperformed as investors looked for a bit of safety and moved away from beta.

Post-close ADBE -6% as they beat (largest beat since ‘21) but ARR was just in line with the Street and the Q2 guide was slightly above, while they reiterated their FY26 outlook. CEO Shantanu Narayen is out.

Let’s get to the good stuff…

AI / SEMIS

Altman was in a suit being interviewed by DRM News yesterday. Video here. Key quotes:

On Tokens as Utility:

"Fundamentally, our business — and I think the business of every other model provider — is going to look like selling tokens. We see a future where intelligence is a utility like electricity or water and people buy it from us on a meter and use it for whatever they want. The demand that we see for that seems like it's going to continue to just go like this."

On efficiency:

"Our first reasoning model was called o1, came out like 16 months ago. Our latest model where we've now integrated reasoning is GPT-5.4. To get the same answer to a hard problem from that first model to 5.4 has been a reduction in cost of about 1,000x. We are still so early in this paradigm. We have so much more to gain about our understanding of how to develop these models and train them and run them efficiently."

"I got to India and someone handed me a briefing sheet and it was like codex usage in India has 10x-ed in some small number of months and I was like, that's got to be a bug. But it was true. It's an even stronger version of what's happening in the US. People saying, 'I'm trying to build a zero-person startup. I'm trying to just write a prompt that's going to make my whole startup — write my software, do my customer support, do my legal stuff — and then I want to go on vacation.'"

On productivity and hiring:

“It used to be that when we would talk to startups, they would talk about how many employees they needed. Now they generally don’t want to hire a lot. They think that’ll slow them down and they’re all focused on how much compute they can get. ‘Can I reserve this much capacity? Can I do a cloud deal for that? Can I get this many tokens?’ Bigger companies are going through that mental shift more slowly, but some are starting to — engineering orgs and product orgs talking about they’re doubling, tripling what they’re planning to ship this year. That has not happened before.”

"I can see a world where we have an incredible productivity boom, quality of life goes up and up, most of the things we say we care about get better and better, and yet GDP and the way we currently measure it goes down and down — like deflation for a very long period of time. I don't know what it means to live in a forever deflationary world."

AVGO -1.6% continues to outperform following earnings. In his interview, Altman said he expects to have his ASIC deployed at scale by the end of this year and to get the first chips back in a few months. Key quotes:

“We should have the first chips deployed at scale by the end of this year. We should have the first chips back just in a few months now. And it looks like it’ll be really good.”

"The chip we're doing is inference only. The thinking behind it is that a specialized chip — to be not necessarily the fastest inference chip, but the cheapest inference chip, the most efficient per watt given the constraints we see in front of us — is going to be important for all of the agent demand we see in the future. It's an opinionated bet. It's a limited chip, but in a world where we're energy constrained, I think it will be very important."

That news came on the back of META’s press release saying it will deliver 4 MTIA chips in 2 years.

The main story today in AI semis was in Optical, centered on Citrini’s Optical piece: LITE -8% / COHR -4%. Key quote from Citrini:

“The first part of the trade, getting long the “in-your-face” winners like Lumentum and Coherent, has paid handsomely. While these companies should see their shares continue to perform well, they’re also approaching a level of “priced in” that isn’t readily apparent in the rest of the supply chain.”

AAOI -17% was mentioned in a more negative light: the piece says its mid-2027 targets require 5x current capacity on CPO technology it has never shipped, and if NVDA’s CPO timeline holds, the merchant pluggable layer could get crowded before AAOI's CPO revenue becomes material.

Irrational Analysis chimed in:
